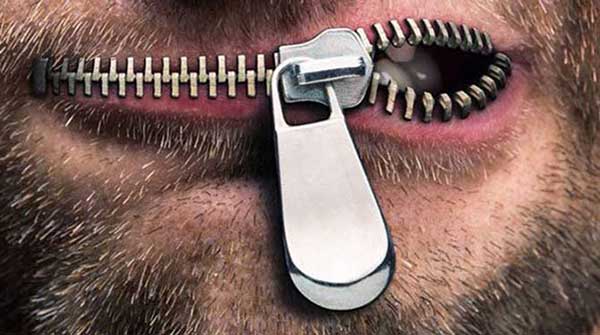

On May 21, the federal government unveiled a lengthy “digital charter” with the noble goals of expanded Internet access and more trust online. If you peel back the feel-good 10 principles and stated justifications, however, you find a new weapon in the censor arsenal.

“The platforms are failing their users, and they’re failing our citizens. They have to step up in a major way to counter disinformation,” Prime Minister Justin Trudeau said when he announced the charter on May 16. “If they don’t, we will hold them to account and there will be meaningful financial consequences.”

Fines are one way to bend Internet firms, along with broader interpretation and enforcement of hate-speech legislation. One impetus for the charter is the Christchurch Call, a pledge among 18 countries and tech giants Facebook, Twitter, Google, Microsoft and Amazon “to eliminate terrorist and violent extremist content online.” Canada signed the agreement on May 15.

The signatory governments plan to prohibit content “in a manner consistent with the rule of law and international human rights law, including freedom of expression.”

However, in a more than symbolic gesture, the United States declined to join. This is consistent with the First Amendment to the U.S. Constitution, which guarantees free speech – among other rights – as a bedrock of the republic.

There’s no dispute that terrorists weaponize social media to maximize their impact, and social-media firms have a role to play in stopping such abuse. However, politicians and tech firms can also co-opt legitimate concerns to restrict speech, stifle criticism and tilt public debates.

The application of safety protections is already limiting legitimate discussions deemed too extreme by censors.

And forthcoming subsidies for approved media outlets – not to mention CBC’s taxpayer funding – demonstrate how Canadian officials can easily incentivize submissive journalism. Proponents of the complicated digital charter explicitly identify “fake news” as one of their chief targets.

The Christchurch Call global strategy came in the wake of the New Zealand mass murder in March. The terrorist live-streamed on Facebook and then supporters quickly re-uploaded to YouTube and other platforms, straining content-moderation efforts.

Although the Christchurch case was clear, and the platform eliminated the file without state coercion, just who is and who is not an extremist can be in the eye of the beholder. Access Now, an advocacy group for digital rights, has criticized the Christchurch Call for its lack of precision in defining “terrorist and violent extremist content, a concept that can vary between countries and in some cases can be used arbitrarily to harm human rights.”

There is no shortage of warning examples. In Fiji, a law aimed at online harassment has led to overreach and a chilling free-speech climate. Bangladesh throttled Internet access ahead of elections, while Benin imposed a nationwide social-media blackout. After the latest terrorist attack in Sri Lanka, the government preemptively shut down all social-media platforms to stop rumours.

Venezuela’s dictatorship routinely blocks streaming websites during opposition protests. As part of an arbitrary and wide-ranging secret inquiry into “fake news,” the Brazilian Supreme Court this year censored a story linking one of its members to a corrupt businessman. Russia has criminalized insults against government officials or the state itself and the spreading of “lies.”

We are wrong to believe this happens only in the Third World or in authoritarian countries.

France has empowered judges to order the removal of any content they deem fake during elections. Germany has enacted a law compelling social media to remove “hate speech,” and Human Rights Watch has denounced this as “unaccountable, overbroad censorship” that “should be promptly reversed.” The United Kingdom wants to hold websites liable for “harmful” content posted by users, a proposal similar to Australia’s move to jail social-media executives. The New Zealand government has made it a crime to merely possess the Christchurch terrorist’s manifesto and has pushed the press to limit its coverage of the massacre trial.

These laws and regulations need not be applied extensively to chill speech online and foster a culture of self-censorship. As human-rights activist Yulia Gorbunova has noted, “authorities don’t really need to control millions of users. All they need to do is have a handful of criminal extremism cases to instil fear.”

Bureaucrats who don’t understand the bottom-up nature of the Internet are paving the way for censorship across the world. Online communication has become the norm, and those who believe they will never be a target should consider the social-media accounts already being blocked or suspended.

Heavy-handed moderation affects conservatives, progressives and people who just threaten the status quo, so attempts to paint this as a one-sided problem are wrong.

Further, because there’s no precise definition of extremism, social-media censors continue to err on the side of what’s less likely to get them into trouble with authorities. Companies face little choice if they’re to remain in business, as demonstrated by Google when it got into bed with the brutal Chinese regime.

When all they have is a hammer, everything starts looking like a nail, both for state censors and centralized social-media moderators. The digital charter exacerbates this problem and impedes the private reconciliation already underway between open access and safety protections.

Fergus Hodgson is the executive editor of Antigua Report, a columnist with the Epoch Times and a research associate with Frontier Centre for Public Policy. Daniel Duarte contributed to this article.

The views, opinions and positions expressed by columnists and contributors are the authors’ alone. They do not inherently or expressly reflect the views, opinions and/or positions of our publication.